Here at Panintelligence, we regularly host discussions on tech. As a company rooted in data, we love to explore the benefits and challenges surrounding artificial intelligence, machine learning and algorithms.

In our recent webinar ‘AI Friend or Foe?’, Ken Miller, CTO of Panintelligence, and Zandra Moore, CEO of Panintelligence, invited Denis Dokter, Relationship Officer at Nexus Leeds, and Nik Lomax, Associate Professor in Data Analytics for Population Research, to discuss the ethical questions surrounding the applications and future of AI.

In this article, we pull out some of the most interesting points that were discussed. You can also watch the full video discussion below:

The Ethics of AI

When we say ‘technology’, people often think of high-tech gadgetry: advanced electronics, computer systems, space travel. But every tool that humans have ever invented was at one point ‘high tech’. Humans are inherently connected to technology, and we continually seek to improve our lives by building tools.

Once upon a time, the metal axe was the latest in tree-felling technology, enabling much faster and more accurate cutting than its predecessor: the stone axe. The wooden wheel was the latest in travel-tech when it first appeared and revolutionised how people could transport themselves and materials.

The internet was a huge leap forward, enabling information to be shared and accessed easily, instantly, cheaply and globally. It has empowered more people to learn more things than ever before. But the internet can be, and has been, used to spread harmful information and incite conflict. Despite this, few would argue that the internet has been, on balance, a bad thing.

Without all of humanity’s accumulated technological advancements, we’d still be sitting in the mud. This is not to say that every piece of technology created by humans has been used only for good; inevitably, some technology has had unexpected negative consequences, and some has been created explicitly for destruction (nuclear weapons, for example). But advances in technology have, for the most part, benefited humankind and greatly raised the standard of living.

Rise of the Machines?

Although much sci-fi has been written about the ‘rise of the machines’ and the creation of a sentient intelligence that eventually enslaves or destroys mankind, the future is likely to be far less dramatic.

Even The Terminator was designed by humans; someone has to write the code. Artificial intelligence is simply another tool and, like the internet or the axe, it can be used for good or bad.

AI is simply the automation of a human process. It allows us to do very repetitive things much faster and more accurately. A person cannot look through thousands of pictures in seconds and identify important information and patterns, but AI can.

And of course, AI will develop further. Some forms of technology can take many years to reach mass adoption. Tablet computers were in use for many years before Apple released the iPad and brought them into the mainstream. The same happened with smartphones.

Sometimes, however, the opposite can happen. Google Glass was very positively received at first, but privacy became a big issue, and what was expected to be a global game-changer was killed off and relegated to a niche product. It’s quite possible, however, that the Google Glass concept will reach mass-market adoption when the time is right.

The Role of National Governments in AI and ML

As AI and ML become more widespread, governments around the world are starting to create bodies to ensure the technology is used in the right way. The UK has a government Office for AI, which is working to create a national AI strategy. Germany, France and the USA are also announcing billion-dollar investments in AI infrastructure.

AI is a driver of innovation in everything from the simple and mundane, such as filtering spam from your inbox, to exciting and extremely complex areas like self-driving cars and cancer screening. It’s just a toolbox, and those tools can be implemented very well or very badly.

Some companies are implementing AI and ML for the wrong use cases. For example, early chat bots were – and sometimes still are – incredibly frustrating. They were an example of the right intentions but the wrong application. We are learning that trying to mimic a human is generally not the best use of AI – yet. Now chat bots are more commonly used as a ‘triage’ tool – filtering customer requests to get them to the right human support agent.

There is certainly an opportunity for AI and ML to be used negatively. The UK government is currently looking for evidence of where AI is being used to reduce competition or harm consumers, for example by using algorithms for price-fixing, discrimination or fake reviews. Without the right regulation, AI can be turned into a foe.

Good intentions can also lead to unexpected bad consequences. There are many examples of tech ideas, created with entirely good intentions, that have led to negative outcomes further down the line. Facebook and Airbnb are two such technologies: both were created for good, but became so big and dominant that new, unforeseen problems later emerged.

As with other technology, whether AI is friend or foe also depends on your perspective. For the caveman who has just invented the bow and arrow, his high-tech invention is a friend indeed. For the rival tribe who have yet to discover it, this new weaponry is very much a foe.

Does AI need to be perfect? The case for self-driving cars

We seem to expect AI to be perfect, yet we, its creators, are imperfect. It should therefore come as no surprise that any AI created by humans will have inherent mistakes and problems. Humans have to fail in order to learn.

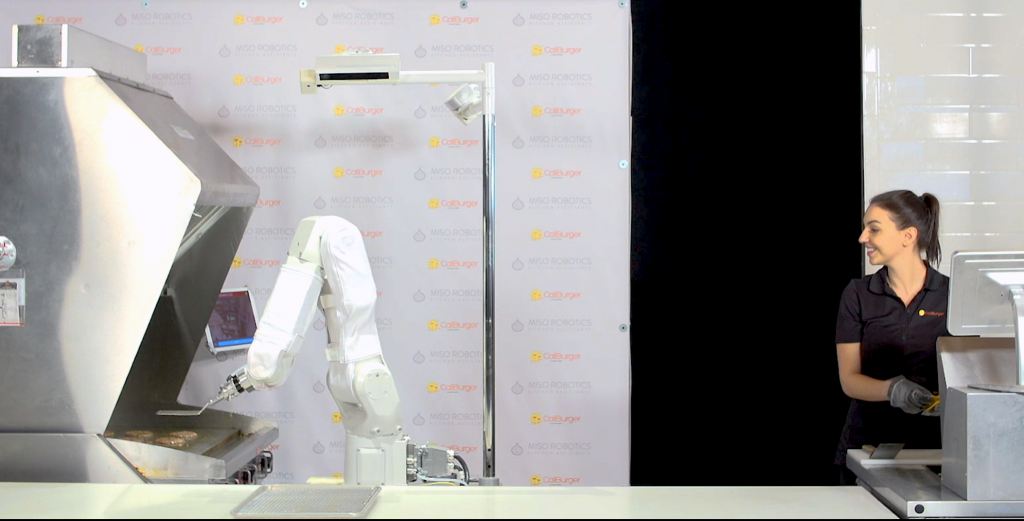

The mainstream media often like to highlight examples of AI tech that make us uncomfortable or have gone wrong. For example, Flippy - the burger-flipping robotic arm that replaces a human cook, or a walking robot that keeps falling over.

Flippy the burger-flipping robot. Image: Miso Robotics

The media hold up such examples as reasons why robots won't or shouldn't replace humans. But burger flipping and walking are perhaps not the best applications of AI, at least not right now. Instead, AI and ML really excel, and really help us, when processing vast volumes of data and drawing valuable insights from it.

Two common arguments against autonomous driving are that there have been several accidents involving self-driving cars, and that people enjoy driving and therefore don’t want to give it up. But once a technology delivers a clear benefit, whether people enjoy the old way becomes irrelevant, certainly when lives are at stake.

It is not necessary for the AI to be 100% perfect. All that is required is for self-driving cars to be – on average – significantly safer than human drivers. Once the tech reaches that stage, it becomes very hard for society to justify humans driving cars – and thereby killing and injuring more humans – just because they like driving.

So we can see that AI, ML and algorithms can bring immense benefits to society, as long as they are used for the right things, in the right way. There are many discussions to be had about what the right way is, and that is what we talk about in part 2, where we explore ‘AI: Advisor Vs Autocrat?’